You may have heard rumors about Momo, the bizarre bird-like creature who has supposedly frightened kids and encouraged them to commit suicide. Some people claimed that Momo was edited into YouTube episodes of the toddlers’ favorite, Peppa Pig, scaring youngsters and their moms. Although there is little proof for these claims, they have once again placed YouTube in the spotlight. People have questioned whether YouTube is a safe place for kids to hang out. Recently, YouTube has reacted to its detractors by restricting comments on videos targeting children, limiting monetization, and terminating some channels. But while the new policy may be reassuring for parents, it is not so good for creators and influencers in the kids’ sector.

Summary: Quick Jump Menu

- YouTube Comments Can be Truly Vile

- YouTube Came Under Fire for Recommending Videos with Inappropriate Comments

- Some of the Videos Were Also Inappropriate

- A Year On, Little Had Changed

- One Problem is Context

- YouTube Finally Bans All Comments on Children’s Videos

- Impact on Influencers and Creators

- YouTube Has Announced an Exception to its Rules

YouTube Comments Can be Truly Vile

Like most forms of social media, YouTube caters to a full cross-section of society. It is also relatively anonymous. You can generally leave comments on videos, knowing that you will never meet the filmmaker face-to-face.

Some people take advantage of this freedom and leave bullying, hurtful, and totally inappropriate comments, which they would never have the courage to say in person. Sure, you have always been able to report comments you believe inappropriate, but most people don’t. And it takes time for somebody to check these complaints and decide whether the claim is justified or not.

YouTube Came Under Fire for Recommending Videos with Inappropriate Comments

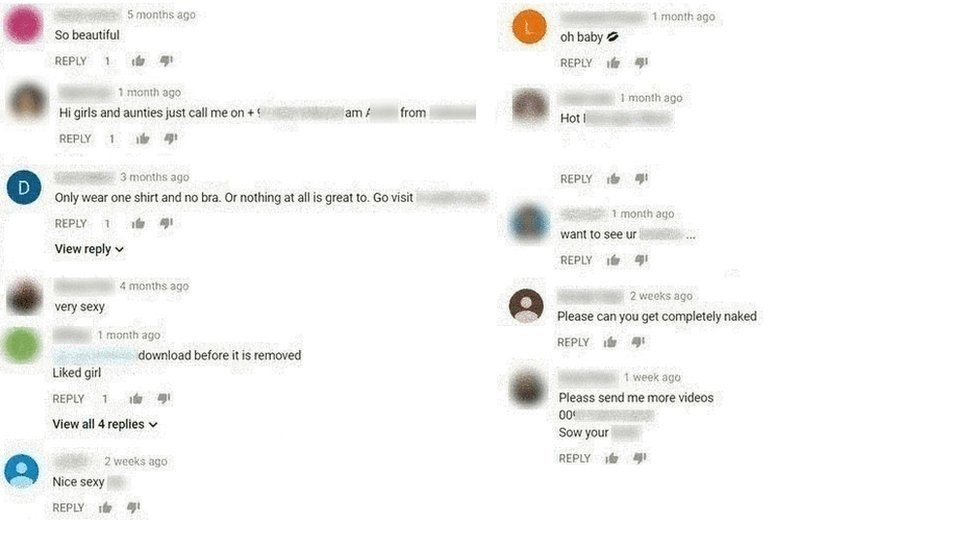

YouTube has received flak on several occasions for the way it handles videos that clearly target children. Just over a year ago, both the BBC and The Times carried out investigations into YouTube. The BBC discovered in November 2017 that YouTube’s system for reporting sexualized comments hadn’t operated properly for more than a year. It indicated that there could be up to 100,000 predatory accounts leaving lewd comments on videos.

The problem at that point was that if somebody reported inappropriate comments, the faulty tool stripped links to the comments under complaint. This made it difficult for YouTube’s voluntary group of moderators to act on complaints.

The BBC investigated and discovered that the comments in question were truly inappropriate. Some were highly sexually explicit, while others included adult phone numbers and requests for sexual fetishes. Remember that these were comments left on videos that featured children younger than 13.

Some of the Videos Were Also Inappropriate

The Times investigation focused more on potentially inappropriate videos that YouTube permitted people to upload. To make matters worse, many of these videos included ads for big-name companies, presumably oblivious to where their advertising appeared.

Many of the videos were filmed and uploaded in all innocence by young girls. Some of the videos showed “young girls filming themselves in underwear, doing the splits, brushing their teeth or rolling around in bed.” The girls, of course, made and shared these videos as part of their everyday life. But a side effect was that pedophiles flocked to these videos, often leaving keywords in Russian to encourage others. Again, the video comments were totally inappropriate, even leaving links to child abuse, and requesting the children in the videos to perform various activities.

Many of these videos resulted in complaints from people using YouTube’s reporting system. However, members of YouTube’s “trusted flagger” program admitted that they were swamped with reports. This clearly wasn’t helped by the fault in YouTube’s reporting software.

The scandal even spawned a name, Elsagate, which Wikipedia describes as “the controversy surrounding videos on YouTube and YouTube Kids that are categorized as ‘child-friendly,’ but which contain themes that are inappropriate for children. Most videos under this classification are notable for presenting content such as violence, sexual situations, fetishes, drugs, alcohol, injections, toilet humor, and dangerous or upsetting situations and activities.” The first Elsagate videos featured Elsa, from the Disney movie Frozen, and Spider-Man doing highly inappropriate activities together.

The Guardian even quotes James Bridle as saying, “Someone or something or some combination of people and things is using YouTube to systematically frighten, traumatize, and abuse children, automatically and at scale.”

YouTube announced a crackdown, bringing in a policy that prevented age-restricted videos from being seen by users who are not logged in or who have entered their age as below 18.

A Year On, Little Had Changed

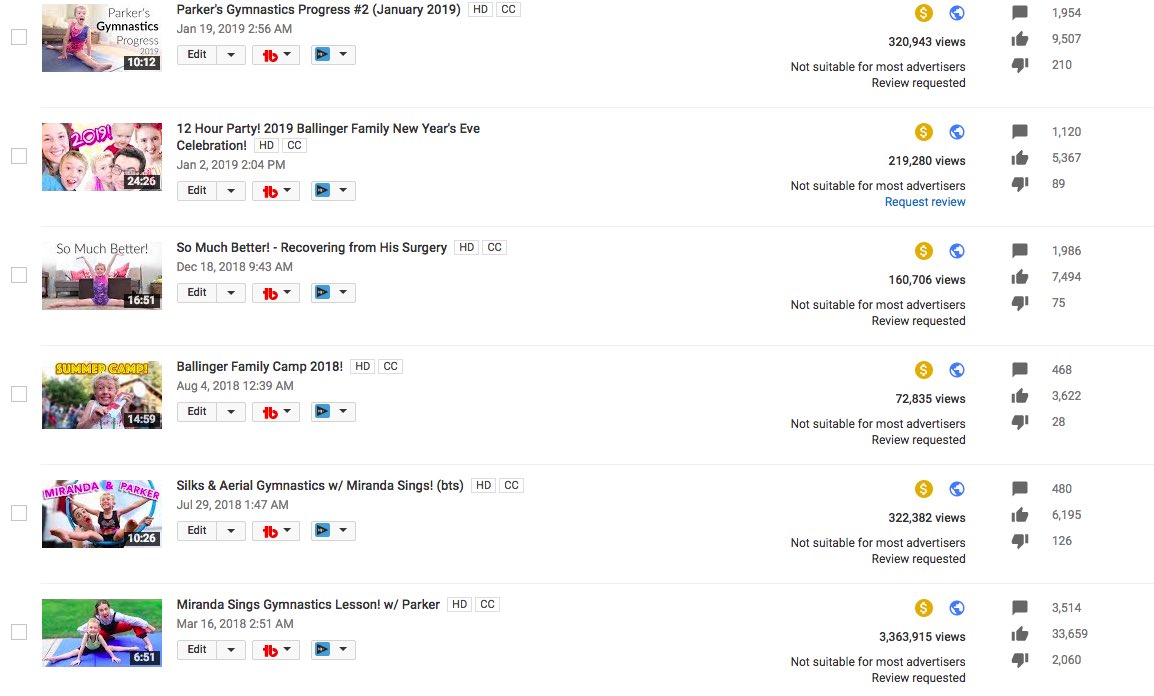

Fast forward a year, and people still found the same problems with children’s YouTube videos. Things came to a head in mid-February 2019 when a Reddit user called Matt Watson posted that he had discovered a wormhole into a soft-core pedophilia ring on YouTube. He stated, “I can consistently get access to it from vanilla, never-before-used YouTube accounts via innocuous videos in less than ten minutes, in sometimes less than five clicks.” He also observed that the inappropriate videos carried advertising from firms such as McDonald’s and Disney.

Once you enter the “wormhole,” every video in the recommended sidebar is more soft-core, sexually implicit material. Commenters consistently time-stamped the sections of the videos where the kids were in compromising positions. To make matters worse, these comments had the most upvotes.

Matt noticed that quite a few of the videos did have disabled comments, meaning that YouTube’s team had already flagged the videos – but they still left the videos online.

One Problem is Context

One of the issues YouTube faces is that many of the videos posted are not in themselves inappropriate. They’re often videos showing young girls going about their everyday activities. The problem is how some adults use these videos, taking them out of context. This makes things particularly tricky for YouTube. How do you explain to a thirteen-year-old girl that a video she made with her friends showing some perfectly innocent activity, for example, gymnastics, could be looked at darkly by some people?

YouTube’s recommendation algorithm has also come under fire. As Reddit user Matt Watson discovered, it only took a couple of clicks from his original click on two adult women in bikinis, before YouTube’s algorithm suggested videos of young girls demonstrating gymnastics poses, showing off “morning routines,” and licking popsicles. The algorithm seemed to assume he had dodgy intentions and almost appeared to “reward” him.

YouTube has already been pushed to modify its algorithm recently because of concern about its AI pushing viewers toward extremist and terrorist content. It also recently revised the AI to reduce recommendations for videos that “could misinform users in harmful ways,” including videos promoting fake miracle cures, claiming the earth is flat, or making “blatantly false claims” about historical events.

YouTube Finally Bans All Comments on Children’s Videos

YouTube responded more quickly to these latest complaints. It saw it had a public relations crisis and had to act. Its advertisers were extremely upset at having their ads connected with such posts, and clearly put pressure on the video-streaming giant. Companies like Epic Games, Nestlé, and Disney were so concerned they pulled their ads from YouTube.

YouTube’s response has been to place a blanket ban on comments on videos featuring children. It has terminated 400 channels it felt deliberately broke YouTube’s rules and deleted tens of millions of comments on other videos. Some of the channels YouTube removed were probably accounts set up by children younger than YouTube’s official minimum age of thirteen.

YouTube says its algorithm is now “more sweeping in scope and will detect and remove 2X more individual comments.”

Impact on Influencers and Creators

On the surface, most influencers and creators will feel that YouTube tightening up its algorithms and eliminating inappropriate comments is an improvement on the previous situation. After all, the problems weren’t related to accounts operated by influencers. The worst comments were either on channels set up by dodgy people or on channels run by ordinary children, unaware of how some people would react to their innocent videos.

However, the latest crisis, along with YouTube’s efforts to fix the problems, has rattled some influencers and creators. For example, it is causing problems for channels which upload videos of children playing with and reviewing new toys. There is a solid niche group of toy and gaming influencers, and the loss of the ability to comment is having a significant effect on their efforts.

Also, the definition of “child” seems somewhat fluid. Things could be particularly interesting in the gaming sector, where some videos of people gaming will continue to allow comments, but others won’t, seemingly depending on how youthful the gamer looks.

The withdrawal of advertising affects all monetized YouTube channels. The fewer firms advertising, the less money there is to be shared among YouTubers.

YouTube is trying to better flag channels it believes are inappropriate for its ads. This doesn’t always go down well, though. As one mother complained via Twitter, “MY 5-YEAR-OLD SON: does gymnastics and is a happy, sweet, confident boy. @youtube: NOT ADVERTISER FRIENDLY”

Many parents whose kids have made good incomes from their haul videos are concerned that they have lost monetization opportunities through no fault of their own. They feel that YouTube is penalizing channels that produce G-rated content for kids, usually without any problem, more than it is punishing anybody else.

YouTube Has Announced an Exception to its Rules

YouTube does still provide an opportunity for legitimate channels that feature children to allow comments. According to a YouTube release, “These channels will be required to actively moderate their comments, beyond just using our moderation tools, and demonstrate a low risk of predatory behavior.”

However, this does require labor-intensive moderation, which could be well beyond the resources of many influencers and popular channels. It will be a near-impossible imposition for the parents behind many popular child-centric channels.
